Thanks to ChatGPT, understanding the EU Digital Identity Wallet was as swift as a blockchain transaction, yet again.

Since its inception, the World Wide Web has undergone significant transformations. Web 1.0, although rudimentary and static by today’s standards, was a fertile ground for creativity and development, fostering fair competition and innovation.

The rise of mobile phones and social media in the early 2000s, hallmarks of the Web 2.0 era, marked a pivotal shift. End users went from passive consumers to active content creators, but at the cost of their privacy. A handful of major tech firms, collectively known as MAGMA (Microsoft, Apple, Google, Meta, and Amazon), came to dominate control over personal data.

Recent years have seen a shift toward decentralized technologies such as blockchain, encrypted peer-to-peer communication, and digital wallets, as a way to counter this centralization. This shift emphasizes decentralization, privacy, and end-user control, echoing the pioneering spirit of Web 1.0.

A key element of Web 3.0 is the digital wallet, whose uses extend beyond financial transactions to credential authentication, such as age verification for alcohol purchases. The European Commission has adopted a proposal for European Union Digital Identity Wallets, which will let citizens hold digital documents such as passports and driver’s licenses, open bank accounts, and make payments.

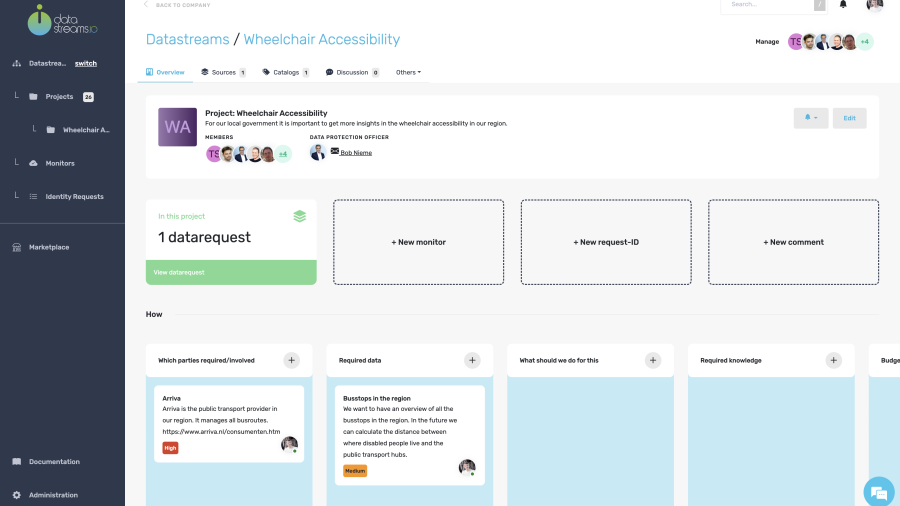

This article examines how this initiative promotes self-sovereign identity and privacy. It begins by explaining universal wallets, then explores the EU’s proposal and its privacy benefits. Finally, it discusses how Datastreams’ low-code platform can help store data in digital wallets in a GDPR-compliant manner.

The Evolution and Importance of Digital Wallets

Digital wallets began as simple financial tools but have evolved significantly with blockchain technology. Early crypto wallets provided a secure environment for signing blockchain transactions; they were essential for managing cryptocurrencies and laid the groundwork for universal wallets.

Universal wallets extend crypto wallet capabilities to manage a wide array of digital assets and credentials, including identity cards, passports, and reputation scores. This evolution addresses the need for secure and comprehensive digital identity management.

What Are Universal Wallets?

Universal wallets store cryptocurrencies and also manage digital identifiers and credentials. Because they can securely handle such a wide range of digital assets and identities, they are becoming essential in sectors from banking to government services. Key benefits include:

- Enhanced Security: Robust encryption protects digital assets and credentials, reducing identity theft and fraud risks.

- Privacy Protection: Users control their digital identities, ensuring personal information is shared only with trusted entities.

- Convenience and Efficiency: These wallets streamline digital asset management and transactions.

- Self-Sovereignty: Users control their personal information, aligning with trends toward decentralized digital identity management.
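To make the privacy and self-sovereignty points concrete, here is a minimal sketch of selective disclosure, the technique that lets a wallet prove a single attribute (for example, that its holder is over 18) without revealing the rest of a credential. It loosely follows the salted-hash idea used by formats such as SD-JWT, but it is a simplified illustration, not that specification. All function and field names are hypothetical, the sample data is made up, and the issuer’s digital signature is replaced by an HMAC with a hypothetical shared key `ISSUER_KEY`; a real issuer would use an asymmetric signature so that verifiers cannot forge credentials.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer key. HMAC is only a stand-in for a real asymmetric
# signature (e.g. ECDSA), which would stop verifiers forging credentials.
ISSUER_KEY = b"demo-issuer-key"


def digest(salt: str, name: str, value) -> str:
    """Salted hash of one claim; the salt stops verifiers guessing hidden values."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def issue(claims: dict) -> tuple[dict, dict]:
    """Issuer: return a 'signed' list of digests plus the per-claim disclosures."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(digest(salt, name, value) for name, (salt, value) in disclosures.items())
    signature = hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()
    return {"digests": digests, "signature": signature}, disclosures


def present(credential: dict, disclosures: dict, reveal: list[str]) -> dict:
    """Holder (wallet): share the signed credential and only the chosen claims."""
    return {"credential": credential,
            "disclosed": {name: disclosures[name] for name in reveal}}


def verify(presentation: dict) -> dict:
    """Verifier: check the issuer tag, then check each disclosed claim against it."""
    cred = presentation["credential"]
    expected = hmac.new(ISSUER_KEY, json.dumps(cred["digests"]).encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, cred["signature"]):
        raise ValueError("credential was not issued by the trusted issuer")
    revealed = {}
    for name, (salt, value) in presentation["disclosed"].items():
        if digest(salt, name, value) not in cred["digests"]:
            raise ValueError(f"claim {name!r} does not match the credential")
        revealed[name] = value
    return revealed


credential, disclosures = issue(
    {"given_name": "Erika", "birth_date": "1990-04-12", "age_over_18": True}
)
shop_view = present(credential, disclosures, reveal=["age_over_18"])
print(verify(shop_view))  # {'age_over_18': True}
```

The shop learns that the holder is over 18 and that the credential comes from the trusted issuer, but never sees the name or birth date. Changing a disclosed value after issuance makes its digest stop matching, so `verify` rejects it.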

Universal wallets are becoming crucial as more sectors adopt blockchain technology and seek secure digital identity solutions. However, using blockchain technology for highly sensitive data, such as passport details, raises concerns. Blockchain achieves security by recording data in a transparent but immutable ledger. This conflicts with the GDPR’s right to erasure (Article 17) and its data minimization principle (Article 5(1)(c)), which requires data controllers to limit the personal data they collect to what is necessary for a specific purpose.

On a public blockchain, once a real identity has been verified and recorded, that record cannot be altered or deleted. As the final section of this article discusses, Datastreams offers a solution to this problem. As an intermediary, our low-code platform gives customers the freedom to design their privacy policies as they see fit, whilst also guaranteeing encryption.
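A widely discussed way to reconcile an immutable ledger with the right to erasure is to keep personal data off-chain and write only a salted hash (a commitment) to the ledger. Deleting the off-chain record together with its salt leaves an on-chain digest that can no longer be recomputed or linked back to the person. The sketch below illustrates that general pattern with a toy hash-chained ledger. It is not a description of how Datastreams works; the class and method names are hypothetical, and whether a leftover digest still counts as personal data under the GDPR is a legal question the code does not settle.

```python
import hashlib
import secrets


class ToyLedger:
    """Append-only, hash-chained log: each block commits to the previous one."""

    def __init__(self):
        self.blocks = []

    def append(self, entry: str) -> None:
        prev = self.blocks[-1]["hash"] if self.blocks else "0" * 64
        block_hash = hashlib.sha256((prev + entry).encode()).hexdigest()
        self.blocks.append({"entry": entry, "prev": prev, "hash": block_hash})

    def is_intact(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev = "0" * 64
        for block in self.blocks:
            expected = hashlib.sha256((prev + block["entry"]).encode()).hexdigest()
            if block["prev"] != prev or block["hash"] != expected:
                return False
            prev = block["hash"]
        return True


class OffChainStore:
    """Hypothetical erasable store holding the personal data and its salt."""

    def __init__(self, ledger: ToyLedger):
        self.ledger = ledger
        self.records = {}

    def verify_identity(self, user_id: str, passport_number: str) -> str:
        salt = secrets.token_hex(16)
        commitment = hashlib.sha256((salt + passport_number).encode()).hexdigest()
        self.records[user_id] = {"salt": salt, "passport_number": passport_number}
        self.ledger.append(commitment)  # only the salted hash goes on-chain
        return commitment

    def erase(self, user_id: str) -> None:
        # Right to erasure: drop the data and the salt. The on-chain digest stays,
        # but without the salt it cannot be recomputed from the passport number.
        del self.records[user_id]


ledger = ToyLedger()
store = OffChainStore(ledger)
store.verify_identity("user-42", "X1234567")
store.erase("user-42")
print(ledger.is_intact(), store.records)  # True {}

# Erasing the entry from the ledger itself would break the chain instead:
ledger.blocks[0]["entry"] = ""
print(ledger.is_intact())  # False
```

The last two lines show why erasure cannot simply be applied to the ledger: overwriting an entry invalidates its hash, which is exactly the immutability property described above.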

The next section examines the European Union’s Digital Identity Wallet proposal and how it promotes privacy and self-sovereignty.

Analysing The EU Digital Identity Wallet Proposal

The European Union’s move towards a Digital Identity Wallet comes at a time when digitalization is rapidly transforming societies and economies. The COVID-19 pandemic significantly accelerated this, highlighting the urgent need for secure and efficient online identification and authentication methods. This shift in digital demand has underscored the limitations of existing systems and the need for a unified, secure digital identity framework across the EU.

The European Commission has dedicated €46 million from the Digital Europe Programme to four major pilot projects, launched on 1 April 2023, to test the EU Digital Identity Wallet in practical scenarios including mobile driving licenses, eHealth, digital payments, and the verification of educational credentials. These pilots aim to refine the wallet’s specifications. In parallel, the Commission is developing a toolkit of technical guidelines, first released on GitHub in February 2023, which will be updated continually and will become mandatory once the legislative process is complete.

The European Digital Identity Wallet proposal aims to create a secure, user-controlled platform for managing digital identities and credentials. This wallet will enable EU citizens to store and share personal information, such as identity cards, passports, and other credentials, securely and selectively. The initiative is part of the broader EU strategy to enhance digital infrastructure, ensuring that all citizens have access to trusted, interoperable digital identity solutions by 2030.

The EU Digital Identity Wallet significantly enhances user control over personal data by providing a secure, decentralized storage system. This innovation allows individuals to manage who accesses their data and when, reducing dependence on centralized systems that are more vulnerable to breaches and misuse. Privacy is a fundamental aspect of the wallet, which incorporates strong encryption and user consent mechanisms to ensure data is shared only with authorized entities. Additionally, a user dashboard enables monitoring of all data transactions, further securing personal privacy.
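The consent and dashboard features described above can be pictured as a gate in front of the wallet’s data: nothing is released unless the user has approved that relying party for that attribute, and every request, granted or refused, is written to a log the user can review. The sketch below is a hypothetical model of that behaviour, not the EU wallet’s actual interface; the class, method, and relying-party names are illustrative.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Wallet:
    attributes: dict
    # relying party -> set of attribute names the user has approved for it
    consents: dict = field(default_factory=dict)
    # every request, granted or refused, for the user's dashboard
    log: list = field(default_factory=list)

    def grant(self, party: str, *names: str) -> None:
        self.consents.setdefault(party, set()).update(names)

    def revoke(self, party: str) -> None:
        self.consents.pop(party, None)

    def request(self, party: str, names: list) -> dict:
        """Release only attributes the user consented to; log the outcome."""
        allowed = self.consents.get(party, set())
        released = {n: self.attributes[n] for n in names
                    if n in allowed and n in self.attributes}
        denied = [n for n in names if n not in released]
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(timespec="seconds"),
            "party": party,
            "released": sorted(released),
            "denied": denied,
        })
        return released

    def dashboard(self) -> list:
        return [f"{e['time']} {e['party']}: shared {e['released']}, refused {e['denied']}"
                for e in self.log]


wallet = Wallet({"age_over_18": True, "address": "Example Street 1"})
wallet.grant("wine-shop.example", "age_over_18")
print(wallet.request("wine-shop.example", ["age_over_18", "address"]))  # {'age_over_18': True}
wallet.revoke("wine-shop.example")
print(wallet.request("wine-shop.example", ["age_over_18"]))  # {}
for line in wallet.dashboard():
    print(line)
```

Keeping consent per relying party and per attribute means a shop approved for age checks never receives the address, and revoking consent takes effect on the very next request.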

The wallet also promotes the concept of self-sovereign identity, empowering individuals to manage their digital identities without intermediaries. This approach aligns with the EU’s broader goals of enhancing digital sovereignty and user empowerment, fostering a decentralized and user-centric digital identity framework. Overall, the EU Digital Identity Wallet represents a significant step towards a more secure, private, and user-controlled digital identity ecosystem.

Secure Your Digital Wallets with Datastreams

Datastreams is a pioneering regulatory technology (RegTech) company that specializes in guiding businesses through complex regulatory landscapes. It provides a low-code platform that bridges verified data sources, digital wallets, and data verification processes, ensuring compliance with European privacy standards.

Datastreams could act as an intermediary, or broker, between the various entities involved in managing personal data, such as original verified sources, digital wallets, and data verification processes. Our platform could also integrate seamlessly with the EU Digital Identity Wallet initiative, ensuring that data is managed securely in accordance with European standards.

By connecting with trusted data sources like healthcare providers and government databases, Datastreams can guarantee the authenticity and accuracy of information stored in digital wallets. This integration supports the EU’s goal of creating a unified, secure digital identity framework, enhancing privacy and self-sovereignty for EU citizens.

Datastreams guarantees data protection through several key features:

- Data Permission Management: The platform establishes and manages permissions, ensuring that personal data is accessed and used only by authorized entities. This includes robust consent management, enabling users to control how their data is shared and used.

- Secure Data Integration: By connecting with verified sources, Datastreams ensures that only authentic and accurate data is stored in digital wallets. This prevents identity theft and enhances the security of personal information.

- Compliance with GDPR: Datastreams’ platform adheres to GDPR requirements, ensuring that all data collection, storage, and sharing processes meet stringent European privacy standards. The platform’s compliance framework helps organizations mitigate legal and reputational risks.

- Self-Sovereign Identity: Datastreams empowers users by allowing them to store their data in digital wallets securely. Users maintain control over their personal information, aligning with the self-sovereign identity principles promoted by the EU Digital Identity Wallet.

These features of Datastreams are beneficial as they ensure data security, transparency, and regulatory compliance. Advanced encryption and cybersecurity protocols protect personal information, while robust consent management and data permission frameworks enhance user control. By integrating verified data sources and adhering to GDPR, Datastreams supports the EU’s vision for secure, self-sovereign digital identities. This cohesive approach not only builds trust among users but also ensures that businesses and individuals can navigate the digital landscape with confidence and security.

Feel free to contact me, and I will be more than happy to answer all of your questions.
